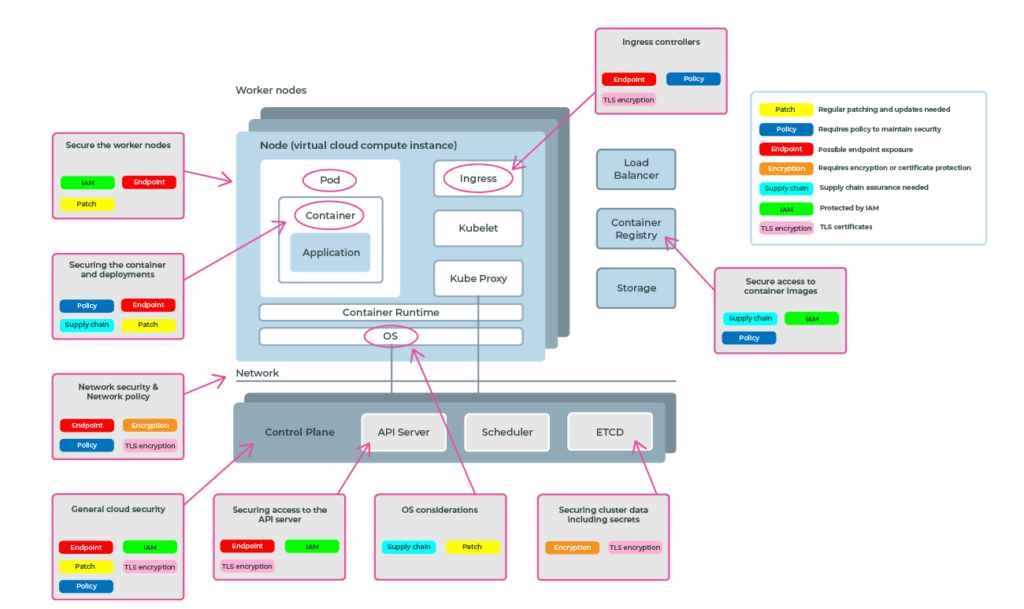

Welcome to this overview of key security considerations for utilising public cloud Kubernetes as a platform for deploying applications. This discussion aims to provide a broad understanding of various security domains essential for effective deployment in this environment. While each area covered warrants deeper exploration, this overview offers a foundational understanding to guide your investment of time and resources.

Kubernetes and container technologies offer a streamlined approach to developing scalable applications. Despite their inherent complexity, they are a significant improvement over traditional, bespoke infrastructure models. However, establishing a robust security framework demands a thorough understanding of these technologies. Any misconfiguration within this intricate ecosystem could expose your organisation's sensitive data.

The API server is the entry point for managing a Kubernetes cluster. Its endpoint is secured through public cloud IAM and Kubernetes RBAC. However, if it is not configured correctly it is open to the internet and hence presents an attack vector.

etcd is a distributed data store that contains cluster data, including Kubernetes secrets. Secrets are the mechanism by which sensitive data, such as user certificates, passwords, or API keys, is made available to containers.

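AKS, EKS and GKE all provide encryption at rest for etcd to protect stored information. However, by default the values in a Kubernetes secret are only base64 encoded. Base64 is an encoding rather than encryption, so the values are effectively plaintext to anyone who can read the secret.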
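To make the point about base64 concrete, here is a minimal Python sketch. The `secret_data` dictionary is a made-up example shaped like the `data` field of a Secret, not output from a real cluster.

```python
import base64

# Hypothetical contents of a Secret's "data" field; the values are
# base64 strings, exactly as the Kubernetes API would return them.
secret_data = {"username": "YWRtaW4=", "password": "czNjcjN0"}


def decode_secret(data):
    """Decode every base64 value in a Secret-style mapping."""
    return {key: base64.b64decode(value).decode("utf-8") for key, value in data.items()}


decoded = decode_secret(secret_data)
print(decoded)
```

No key is needed to reverse the encoding, which is why read access to secrets should be tightly restricted through RBAC.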

Typically the control plane in public cloud Kubernetes runs in a separate network to the worker nodes (where applications are running). As a consumer of the service, you have no access to the control plane network, which is managed and secured by the cloud provider.

The access point between the two networks must be considered when securing your clusters. Providers have different mechanisms for mitigating security gaps:

By default, all pods within a Kubernetes cluster can communicate freely with other pods. This presents a considerable threat of internal attacks from applications running within the cluster.

These threats should be mitigated through network security constructs that exist within the cluster. Network policies are Kubernetes resources that control ingress and egress traffic between pods, namespaces and other network endpoints.
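As a rough illustration of network policies, the Python sketch below builds two `networking.k8s.io/v1` NetworkPolicy manifests as plain dictionaries and prints them as JSON. The namespace names, the `app` label and the port are assumptions chosen for the example. Network policies are only enforced when the cluster's network plugin supports them.

```python
import json


def default_deny_policy(namespace):
    """Build a NetworkPolicy that blocks all pod traffic in one namespace."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-all", "namespace": namespace},
        "spec": {
            # An empty podSelector selects every pod in the namespace.
            "podSelector": {},
            # Listing both types with no allow rules denies ingress and egress.
            "policyTypes": ["Ingress", "Egress"],
        },
    }


def allow_ingress_from_namespace(namespace, app, source_namespace, port):
    """Allow pods labelled app=<app> to receive TCP traffic from one namespace."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {
            "name": f"allow-{app}-from-{source_namespace}",
            "namespace": namespace,
        },
        "spec": {
            "podSelector": {"matchLabels": {"app": app}},
            "policyTypes": ["Ingress"],
            "ingress": [{
                # kubernetes.io/metadata.name is set automatically on namespaces.
                "from": [{"namespaceSelector": {"matchLabels": {
                    "kubernetes.io/metadata.name": source_namespace}}}],
                "ports": [{"protocol": "TCP", "port": port}],
            }],
        },
    }


# Hypothetical namespaces and workload, for illustration only.
policies = [
    default_deny_policy("payments"),
    allow_ingress_from_namespace("payments", "api", "frontend", 8443),
]
print(json.dumps({"apiVersion": "v1", "kind": "List", "items": policies}, indent=2))
```

Starting from a default deny policy and then adding narrow allow rules means that new workloads are isolated until someone explicitly opens a path to them.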

Applications in a Kubernetes cluster run in containers within a virtual server called a node. That node has, amongst other things, a host operating system and container runtime (which runs the container). Both of these components require attention within your Kubernetes security posture as a breach in a host will compromise everything you are running there, including Kubernetes and the workloads inside.

The public cloud providers do a lot of work in mitigating security breaches at this level including running CIS benchmarks against the host OS and regularly patching and updating. However, as the end user, you still have responsibilities to make sure that you are adopting the cloud patches and upgrades.

The public cloud Kubernetes services offer a range of node options when creating clusters, from Windows and Linux hosts to container-optimised operating systems. Choosing the right OS for your nodes includes understanding its ongoing security patching strategy.

“Be aware of differences in public cloud patching strategies”

Different cloud providers have different methods of patching and updating the OS and container runtimes in their offerings.

In general, if you are using “self-managed” VMs as hosts then you are responsible for patching and upgrading. If you are using provider-managed VMs then some or all of the patching and upgrading process is automatically applied.

There are several things you can do to harden self-provided node images, such as removing package managers and using read-only filesystems. The OS provider is usually the best source of guidance on hardening images.

Shared responsibility is nowhere more relevant than in node security and patching. You must be aware of the methods your cloud provider uses for security patches and updates, and ensure that you have processes in place to review and apply them so that node security stays up to date.

Security around containers and container workloads is a large subject with many implications, and it is beyond the scope of this article. However, you should be aware of the areas that need to be addressed with regard to protecting systems and data.

One important area to think about is the safety of the supply chain.

Securing container workloads starts with how the container interfaces with the container runtime and hence the underlying worker node and networks. Containers pose a number of security concerns that must be addressed through the application of policy-based protection.

Application containers must be created to only expose the services and components needed to do the role of the container. Moreover, policies must be in place to deny certain aspects of container runtime interactions such as:

The list above is just a selection to give a view of the importance of container security and where policy needs to be created to protect and secure.
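Policy-based protection of this kind can be pictured as a check run against each pod specification before it is admitted. The Python sketch below is an illustration only. The specific checks (privileged mode, privilege escalation, running as root, host namespaces) are common examples that I have assumed for the purpose, and the pod definitions are hypothetical. For brevity it reads only container-level `securityContext` settings and ignores pod-level defaults.

```python
def container_violations(container):
    """Return the reasons one container spec breaks the assumed policy."""
    ctx = container.get("securityContext", {})
    problems = []
    if ctx.get("privileged", False):
        problems.append("runs privileged")
    # Treat an unset value as a violation: this policy requires an explicit False.
    if ctx.get("allowPrivilegeEscalation", True):
        problems.append("allows privilege escalation")
    if not ctx.get("runAsNonRoot", False):
        problems.append("may run as root")
    return problems


def pod_violations(pod):
    """Collect host-namespace and per-container violations for a pod."""
    spec = pod.get("spec", {})
    problems = [
        f"uses {field}"
        for field in ("hostNetwork", "hostPID", "hostIPC")
        if spec.get(field, False)
    ]
    for container in spec.get("containers", []) + spec.get("initContainers", []):
        for problem in container_violations(container):
            problems.append(f"container {container['name']} {problem}")
    return problems


# Hypothetical pods used to exercise the checks.
risky_pod = {"spec": {
    "hostNetwork": True,
    "containers": [{"name": "app", "image": "example/app:1.0",
                    "securityContext": {"privileged": True}}],
}}
hardened_pod = {"spec": {
    "containers": [{"name": "app", "image": "example/app:1.0",
                    "securityContext": {"privileged": False,
                                        "allowPrivilegeEscalation": False,
                                        "runAsNonRoot": True}}],
}}

print(pod_violations(risky_pod))
print(pod_violations(hardened_pod))
```

In a real cluster a check like this would normally live in an admission controller or policy engine rather than in a standalone script.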

Pod Security Policy was deprecated in Kubernetes 1.21 and removed completely in 1.25 (released in August 2022). It was a core feature used to define the pod and OS level security restrictions described above. Be aware of this if your existing solutions make use of it. Take a look at these blogs for more information on the rationale behind the change and on alternative tools:

PodSecurityPolicy is dead. Long live...?

How to use the security profiles operator

The ubiquity of containers has brought software supply chain security to the fore. The container packaging format allows software to be decomposed into “layers”, which may come from other sources such as third-party software providers. This layered approach makes it difficult to identify what each layer contains and which security controls and mitigations have been applied to it.

Moreover, base container images can be sourced from public repositories where the content of the layers is unknown, and there is a high potential for malicious software to be embedded in them.

Care must be taken in your software delivery process to ensure that the container images used in development come from trusted sources. They should also be scanned so that vulnerable image layers are identified and the software inside the container carries the latest patches.

One important area to consider is how container builds are identified internally. Version numbers as tags are good for identification, but they are not enough to ensure that what you have in the container is what you are expecting, because a tag can be moved to point at a different image. Take a look at using the SHA digest to ensure that the image is the same as expected. Also, take a look at image signing, which gives even greater confidence that the image is exactly the same as when it was signed.
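The idea behind digest checking can be shown with a short Python sketch. It hashes some content with SHA-256, compares the result with an expected digest, and tests whether an image reference is pinned by digest rather than by tag. The registry name and content are placeholders, and a real registry client verifies digests for you when you pull by digest.

```python
import hashlib


def sha256_digest(content):
    """Return the digest of some bytes in the 'sha256:<hex>' form used for images."""
    return "sha256:" + hashlib.sha256(content).hexdigest()


def matches_digest(content, expected):
    """True when the content hashes to exactly the expected digest."""
    return sha256_digest(content) == expected


def is_pinned_by_digest(image_ref):
    """True when an image reference ends in '@sha256:' plus 64 hex characters."""
    _, separator, digest = image_ref.partition("@sha256:")
    return (
        bool(separator)
        and len(digest) == 64
        and all(char in "0123456789abcdef" for char in digest)
    )


# Placeholder content standing in for an image manifest.
manifest = b"example image manifest"
expected = sha256_digest(manifest)

print(matches_digest(manifest, expected))
print(matches_digest(b"tampered image manifest", expected))
print(is_pinned_by_digest("registry.example.com/app@" + expected))
print(is_pinned_by_digest("registry.example.com/app:1.2.3"))
```

A digest proves the content has not changed, while a signature additionally ties that content to whoever signed it.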

Supply chain issues are discussed further in this blog:

Tutorial: Keyless sign and verify your container images with cosign

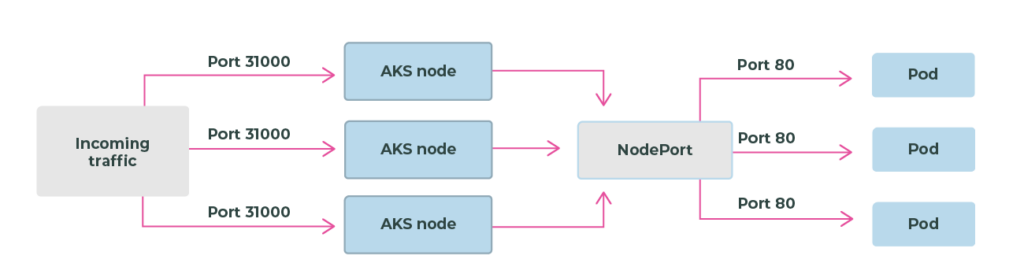

Public cloud Kubernetes applications that need to expose public endpoints have a few options. Typically these fall into three areas:

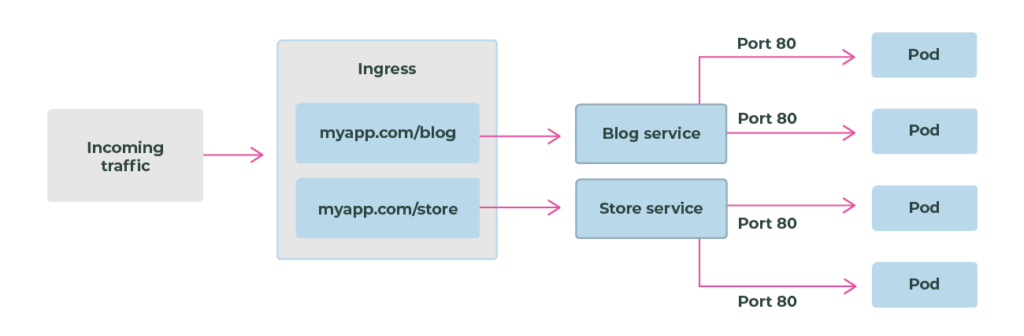

Kubernetes ingress is configured with two objects: the ingress controller and the ingress object.

An ingress controller is a piece of software that provides reverse proxy, configurable traffic routing, and TLS termination for Kubernetes services. Kubernetes ingress resources are used to configure the ingress rules and routes for individual Kubernetes services. Using an ingress controller and ingress rules, a single IP address can be used to route traffic to multiple services in a Kubernetes cluster.
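The sketch below shows, as a Python dictionary printed as JSON, roughly what an ingress resource routing one host to two services with TLS could look like. The host name, secret name, service names and the `nginx` ingress class are all assumptions for illustration. Which class is available depends on the ingress controller installed in your cluster.

```python
import json


def ingress_manifest(name, host, tls_secret, routes, ingress_class="nginx"):
    """Build a networking.k8s.io/v1 Ingress routing URL paths to services.

    routes maps a URL path prefix to a (service name, port number) pair.
    """
    paths = [
        {
            "path": path,
            "pathType": "Prefix",
            "backend": {"service": {"name": service, "port": {"number": port}}},
        }
        for path, (service, port) in routes.items()
    ]
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"name": name},
        "spec": {
            "ingressClassName": ingress_class,
            # The named Secret must hold the TLS certificate and key for the host.
            "tls": [{"hosts": [host], "secretName": tls_secret}],
            "rules": [{"host": host, "http": {"paths": paths}}],
        },
    }


# Hypothetical host and services sharing a single external address.
ingress = ingress_manifest(
    name="shop",
    host="shop.example.com",
    tls_secret="shop-tls",
    routes={"/api": ("api", 8080), "/": ("web", 80)},
)
print(json.dumps(ingress, indent=2))
```

Both services sit behind the same host and external address, and the ingress controller terminates TLS before forwarding traffic to them.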

A NodePort service creates a port mapping on the underlying node that allows the application to be accessed directly with the node IP address and port.

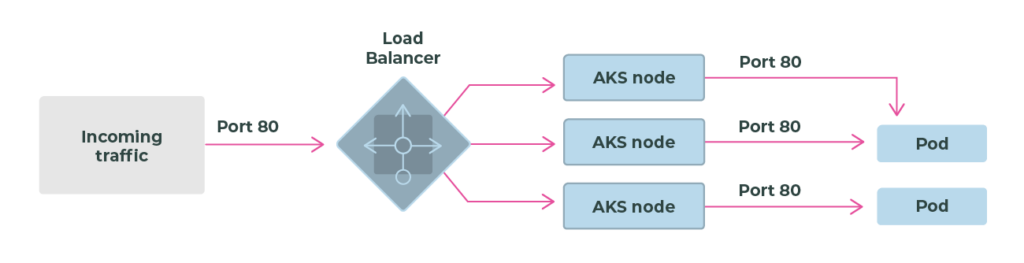

A LoadBalancer service uses a load balancer resource that has an external IP address and connects to the requested pods. To allow customers' traffic to reach the application, load balancing rules are created on the desired ports.
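The two service-based options, a node port mapping and a cloud load balancer, differ mainly in the `type` field of the Service. The Python sketch below builds both as dictionaries. The names, ports and CIDR range are assumptions, and the node port check uses the default range of 30000 to 32767, which a cluster administrator can change.

```python
import json


def service_manifest(name, app, port, target_port, service_type,
                     node_port=None, source_ranges=None):
    """Build a v1 Service of type NodePort or LoadBalancer for pods labelled app=<app>."""
    if service_type not in ("NodePort", "LoadBalancer"):
        raise ValueError("service_type must be NodePort or LoadBalancer")
    port_spec = {"protocol": "TCP", "port": port, "targetPort": target_port}
    if node_port is not None:
        # Default Kubernetes node port range; clusters may be configured differently.
        if not 30000 <= node_port <= 32767:
            raise ValueError("node_port is outside the default 30000-32767 range")
        port_spec["nodePort"] = node_port
    spec = {"type": service_type, "selector": {"app": app}, "ports": [port_spec]}
    if source_ranges:
        if service_type != "LoadBalancer":
            raise ValueError("source_ranges only applies to LoadBalancer services")
        # Restrict which client CIDR ranges may reach the load balancer.
        spec["loadBalancerSourceRanges"] = list(source_ranges)
    return {"apiVersion": "v1", "kind": "Service", "metadata": {"name": name}, "spec": spec}


# Hypothetical services exposing the same application in two ways.
node_port_service = service_manifest("web-nodeport", "web", 80, 8080, "NodePort", node_port=30080)
load_balancer_service = service_manifest(
    "web-lb", "web", 443, 8443, "LoadBalancer", source_ranges=["203.0.113.0/24"]
)
print(json.dumps([node_port_service, load_balancer_service], indent=2))
```

Limiting the source ranges on a load balancer is one simple way to avoid exposing a service to the whole internet by accident.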

The public cloud Kubernetes providers generally hand the security concerns with ingress back to the user. It is up to you to configure ingress controllers, certificates and policy to secure access into your services from the outside. Sufficient policy and process should be in place to identify misconfigured ingress that could pose a security threat.

Take a look at this blog post which talks about ingress in EKS with TLS:

Tutorial: How to expose Kubernetes services on EKS with DNS and TLS

By default, Kubernetes can pull container images defined in your Pod specifications from any destination, as long as it's reachable from the Node. This introduces the risk that a user (authorised or not) could accidentally or maliciously specify an image to run from an untrusted source, which may cause direct harm to the platform and neighbouring services, resulting in data exfiltration, and increased hosting and auditing costs.

Employing cluster-level image policy controls significantly reduces this risk. This can be achieved through custom admission controllers (e.g. Open Policy Agent Gatekeeper).
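The decision an image policy makes can be sketched in a few lines of Python. This is not how Gatekeeper itself is configured, since it uses its own policy language. The sketch only shows the underlying logic, with hypothetical registry prefixes and pod definitions. It checks regular and init containers and leaves out ephemeral containers for brevity.

```python
# Hypothetical allowlist; the trailing slash stops look-alike hosts such as
# "registry.example.com.attacker.io" from matching by prefix.
TRUSTED_PREFIXES = (
    "registry.example.com/platform/",
    "registry.example.com/team-a/",
)


def pod_images(pod):
    """List the images used by a pod's regular and init containers."""
    spec = pod.get("spec", {})
    containers = spec.get("containers", []) + spec.get("initContainers", [])
    return [container["image"] for container in containers]


def untrusted_images(pod, trusted=TRUSTED_PREFIXES):
    """Return every image that does not come from an allowed repository prefix."""
    return [image for image in pod_images(pod) if not image.startswith(trusted)]


def admit(pod):
    """Admit the pod only when all of its images are trusted."""
    return not untrusted_images(pod)


# Hypothetical pods used to exercise the policy.
good_pod = {"spec": {"containers": [
    {"name": "app", "image": "registry.example.com/team-a/app:1.4.2"},
]}}
bad_pod = {"spec": {
    "initContainers": [{"name": "setup", "image": "docker.io/someone/setup:latest"}],
    "containers": [
        {"name": "app", "image": "registry.example.com/team-a/app:1.4.2"},
        {"name": "sidecar", "image": "registry.example.com.attacker.io/team-a/proxy:1.0"},
    ],
}}

print(admit(good_pod))
print(untrusted_images(bad_pod))
```

An admission controller applies the same kind of decision at the API server, so an untrusted image is rejected before the pod is ever scheduled.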

What to do:

Take a look at the following blog that talks about verifying the container supply chain. Appvia Wayfinder also implements a custom admission controller which provides policy enforcement of trusted repositories:

5 things to improve your Kubernetes security posture

Hopefully this has given a reasonable high-level account of most of the security areas that you will need to be aware of when thinking about public cloud Kubernetes as a tool to deliver applications. Each of the areas I have mentioned goes into much more detail on what to secure and how, but you should now have an appreciation of where to invest your time.

Kubernetes and containers are a great set of technologies for building scalable applications in a simpler, more standardised way. Yes, it is still complex, but a lot less complex than building the snowflake infrastructure and architectures of the past. Devising a well-thought-out security posture requires you to understand the moving parts, as misconfiguration of any of these may expose your organisation’s data.

Here at Appvia we have products and services that focus on providing security guardrails and policy-based compliance for public cloud Kubernetes. Appvia Wayfinder brings you peace of mind and compliance in delivering on your security concerns.