Fast Facts

Analysis that used to take this organisation 20 days to accomplish can now be completed in half an hour.

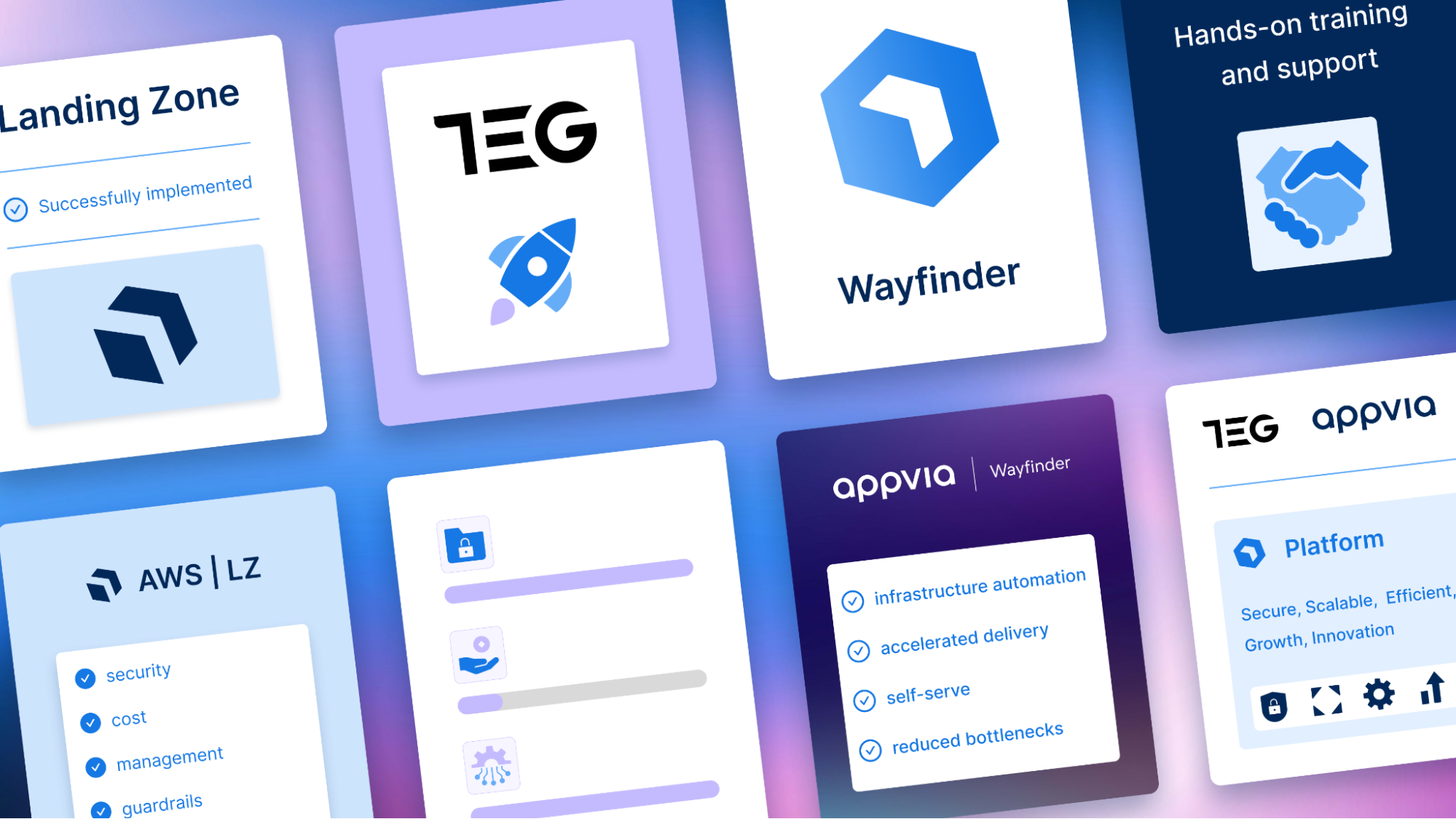

We assisted this organisation by implementing four key factors: a secure landing zone, an on-boarding mechanism for applications, containerisation and training.

Overview

CGI’s ETP had been developing a machine learning platform, Lovelace, with monitoring and logging capabilities, to simplify the deployment of machine learning workloads and enhance overall developer experience.

The small internal team of Machine Learning Engineers was developing on an AWS EKS cluster created with self-managed CloudFormation templates. These templates could not be used to provision managed Kubernetes clusters on other clouds, such as Azure Kubernetes Service (AKS) or Google Kubernetes Engine (GKE).

This would significantly limit their customers’ choice of cloud provider. They required a secure, scalable and cost-efficient solution to support the deployment of their platform and workloads across all major public cloud providers.

Building this capability in-house, by altering their existing implementation to support multi-cloud deployment, would call for significant engineering overhead and time. It would involve time-consuming tasks such as translating cloud provider-specific tooling, like CloudFormation templates, for each major cloud provider. As a trusted partner, CGI called on Appvia’s cloud-native expertise for support and guidance to provide a cost-effective and scalable solution.

Appvia Wayfinder reduced the lead time for deploying secure applications across multiple clouds from 1 week to 1 hour. It also enabled developers to maintain their focus on building applications, with the peace of mind that their Kubernetes clusters are well managed and adhere to security best practices.

The challenge

Limitations to achieving secure, multi-cloud Kubernetes Management

The machine learning platform, Lovelace, builds on well-supported open source products that run as Kubernetes workloads, in the cloud or on premises. It is offered to customers as a service, in which the underlying infrastructure is provisioned, upgraded and maintained for them, or it can be installed directly on the customer’s target environment.

The platform provides value to their customers, with one caveat: it was developed on Amazon Web Services (AWS) and could only be provisioned on AWS EKS clusters.

High-level architecture of Lovelace

Achieving multi-cloud

Challenge: Risk of misconfiguration

If Kubernetes clusters aren’t managed in an ‘elegant’ way, it can lead to misconfiguration. Using cloud provider native tooling such as CloudFormation meant that there was no way of scaling infrastructure beyond AWS.

Solution: Automated cluster provisioning management

Appvia Wayfinder removes the investment that a multi-cloud implementation would otherwise require by abstracting away cloud provider-specific options that are not transferable between providers. Clusters and namespaces can be self-served with automated best practices and integrations that support on-demand auto-scaling.

Implementing best-practice security

Challenge: Leaving the environment open to vulnerabilities

If the Kubernetes version within the clusters is outdated, there’s a risk of leaving the environment open to vulnerabilities and attacks.

Solution: Automated policy administration and security hardening

Appvia Wayfinder automates the highest security standards across Kubernetes clusters, including least-privilege and time-based access. In meeting all of CGI’s security requirements and achieving benchmark compliance, nothing is left to human error. In-cluster security controls are also in place to ensure that applications and workloads hosted within a Wayfinder cluster adhere to best practices via pre-defined pod security. For example, the use of privileged containers is restricted, and network policies control the flow of traffic to workloads.
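To make the privileged-container restriction concrete, the following is a minimal sketch of the kind of check a pod security control performs. It is not Wayfinder's implementation; the function name `find_privileged_containers` and the example manifest are hypothetical and only illustrate the idea of rejecting pods whose containers set `securityContext.privileged: true`.

```python
from typing import Any


def find_privileged_containers(pod: dict[str, Any]) -> list[str]:
    """Return the names of containers in a Pod manifest that request privileged mode."""
    spec = pod.get("spec", {})
    # Regular, init and ephemeral containers can all carry a securityContext.
    containers = (
        spec.get("containers", [])
        + spec.get("initContainers", [])
        + spec.get("ephemeralContainers", [])
    )
    return [
        c.get("name", "<unnamed>")
        for c in containers
        if (c.get("securityContext") or {}).get("privileged") is True
    ]


# Hypothetical manifest, used only for illustration.
example_pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "trainer"},
    "spec": {
        "containers": [
            {"name": "model", "image": "example/model:1.0"},
            {
                "name": "debug",
                "image": "example/debug:1.0",
                "securityContext": {"privileged": True},
            },
        ]
    },
}

violations = find_privileged_containers(example_pod)
print(violations)  # ['debug']
```

In a real cluster this kind of rule is normally enforced at admission time by the platform, so that a non-compliant pod is rejected before it is scheduled rather than detected afterwards.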

Reducing operational overhead

Challenge: Installing and managing platform components leads to operational overhead

The Lovelace platform requires manual configuration and set-up for TLS and DNS, as well as open source tooling to operate. This can lead to an operational overhead, as each upgrade of that open source tooling requires evaluation to ensure that the platform is secured against vulnerabilities identified by the community. In addition, compatibility across TLS, DNS and Ingress must be maintained.

Solution: Automated configuration of integral platform components

Appvia Wayfinder gives its users the option to automatically provision open source application services, such as ExternalDNS, cert-manager and the NGINX ingress controller, alongside every Kubernetes cluster out of the box. This requires zero operational overhead, creating a user-centric process where CGI can create the resources that they need, as they need them.
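As an illustration of how these three components typically work together, the sketch below builds a standard Kubernetes Ingress manifest in Python. It assumes the NGINX ingress controller is registered under the ingress class `nginx`, that cert-manager has a cluster issuer available (the name `letsencrypt-prod` is hypothetical), and that ExternalDNS is configured to watch Ingress resources so it can create a DNS record for the host. The host, service name and helper function are also assumptions made for the example, not part of Lovelace or Wayfinder.

```python
import json
from typing import Any


def build_ingress(name: str, host: str, service: str, port: int, issuer: str) -> dict[str, Any]:
    """Build an Ingress manifest that requests a TLS certificate for `host`."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {
            "name": name,
            # cert-manager reads this annotation and issues a certificate
            # into the secret named under spec.tls.
            "annotations": {"cert-manager.io/cluster-issuer": issuer},
        },
        "spec": {
            # Selects the NGINX ingress controller (assumed class name).
            "ingressClassName": "nginx",
            "tls": [{"hosts": [host], "secretName": f"{name}-tls"}],
            "rules": [
                {
                    # ExternalDNS, when watching Ingress resources, can
                    # create a DNS record for this host.
                    "host": host,
                    "http": {
                        "paths": [
                            {
                                "path": "/",
                                "pathType": "Prefix",
                                "backend": {
                                    "service": {"name": service, "port": {"number": port}}
                                },
                            }
                        ]
                    },
                }
            ],
        },
    }


# Hypothetical values, used only for illustration.
manifest = build_ingress(
    name="lovelace-ui",
    host="lovelace.example.com",
    service="lovelace-ui",
    port=80,
    issuer="letsencrypt-prod",
)
print(json.dumps(manifest, indent=2))
```

With the supporting services already installed alongside the cluster, a single resource like this is usually all a team needs to submit to get routing, a certificate and a DNS record, which is where the reduction in manual TLS and DNS set-up comes from.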

The solution

Wayfinder provides CGI’s ETP with a consolidated and rich user interface that simplifies the creation and maintenance of secure Kubernetes clusters across GKE, AKS and EKS.

With Appvia Wayfinder abstracting away the complexity of cluster management, CGI can host Lovelace in the cloud in 1 hour instead of 1 week. Their team of engineers can remain focused on developing applications while adhering to security best practices through Wayfinder’s built-in policy administration and security hardening, and cluster management is now fast, secure and scalable.

Appvia Wayfinder enables CGI to overcome the day 2 concerns and challenges around application and platform operations by providing:

1. Centralised cluster management

2. Policy administration / compliance requirements

3. API events emission for onwards integration into a logging solution

4. Exposed Prometheus metrics for monitoring the health of the product

5. Automated patches, updates and upgrades for Kubernetes, operating systems and networking protocols

6. Application deployments at scale across multiple clusters with GitOps integration